CODED BIAS is the newest film by documentarian Shalini Kantayya (CATCHING THE SUN), which made its world premiere at the 2020 Sundance Film Festival in the U.S. Documentary Competition, and was set to play at SXSW before the festival’s cancellation. Inspired by the research of MIT Media Lab computer scientist Joy Buolamwini, CODED BIAS illuminates the invisible inequalities embedded in the infrastructure of code, and how they affect our lives. The film received development support from the Alfred P. Sloan Foundation. We spoke to Shalini Kantayya to learn more about her perspective on the issues and work on the film.

Science & Film: How did you first come across Joy Buolamwini’s work?

Shalini Kantayya: I am a science fiction fanatic, and also, as a hobby, I imagine the future, so I tend to research disruptive technologies and the ways that they impact inequality or equality. I came across Joy’s work and Cathy O’Neil’s work and Zeynep Tufekci’s work on their TED talks and thought there was a story there.

A common theme with many of the thought leaders in the film is that they are both very astute–technically and scientifically–and at the same time, many of them have this outsider perspective, whether it’s [because of] being a woman, or being a foreigner, or being of color and not having the computer recognize your face. Many of the thought leaders in my film had these experiences, both being able to see inside of this very exclusive industry, and also being an outsider, so being able to bring this rare kind of humanity to the technology.

It was a challenge to represent so many intellectual, heavyweight data scientists and mathematicians, and to try to respectfully communicate their research and ideas in small bites. That was very challenging. I think that’s why I appreciate Joy so much, because I feel that she’s so uniquely positioned to show the world where these technologies fall short, and where we could be more ethical and more humane.

S&F: What was the main issue that you wanted to highlight?

SK: These technologies, whether we are aware, or whether we have been sleepwalking through rapid developments in technology, are changing our world–transforming healthcare, criminal justice, who gets the job, and who is in our [online] dating feed. We haven’t given a lot of thought to how these systems should work, and the people who are designing them are a very elite few who are designing for everyone. The film, first of all, seeks to examine whether that’s healthy for a democracy. And also, how we’re using these technologies and how we govern them.

What I’m proud of and what I love about the film is that these women are making a difference in the world; they are fighting for a more humane and ethical use of the technologies that will shape our future. In the making of the film I really did see how a few individuals can change the conversation, like Silkie Carlo with Big Brother Watch in the UK, even though the UK has just adopted use of real-time facial recognition. But for a long time, it was literally those three people who were holding that [organization] up in the UK, just by challenging and observing what was happening. This is something that we should be concerned about here in the U.S.: we have no data privacy laws and rights. What I learned in the making of the film is that data rights are really human rights, and you see that for the people who have been harmed by A.I. bias in the film.

I’m a TED fellow, [so] I speak to a lot of people who work in the technology industry, and I think that they are often well-intentioned and also very unaware because they’re in an elite bubble of makers. I think that we all have blind spots, and whether it's just because these technologies are so widely deployed at scale [or not], it’s really important that we think about unintentional harm before these things are deployed at scale.

S&F: As I’m sure you’re aware, there were a few timely developments related to this technology around the time your film premiered at Sundance, namely all the press around facial recognition technology. I’m curious how making this film has changed the way you read about these developments and your relationship to the industry?

SK: It’s totally changed my way of thinking about the world. I knew nothing about this! [laughs] There are six PhDs in my film and I’m a filmmaker; I knew nothing when I began this film and I sort of stumbled down the rabbit hole. One, I think we all take for granted that these [technologies] are neutral and don’t have bias. Because I’m a layperson, I really hope that the strength of the film is to make this stuff barstool conversation. That’s what science should be–it should be a barstool conversation! I really believe that. I’ve learned great science on barstools. I’m not kidding, I’m just very fortunate that way. So that’s what the film seeks to do: empower people with the information about how these systems work. We interact with them every day of our lives, and they are already making automated decisions and giving us an invisible nudge in all kinds of directions.

The other thing [I learned] is about the power of a few people. I’m so grateful to Joy and Cathy and others for the power of their research, for giving me such a great roadmap as a filmmaker, and for challenging big power in that way, through science. I hope that science is not political. Science is science, and I think that Joy’s research findings were so powerful, and I think the power of science is both the validity of the research, and also communicating it to the public so that we can make informed decisions as a democracy. I see the experts–the data scientists and mathematicians like Joy and Cathy in the film–as these amazing researchers that we rely on. My work as a filmmaker is to communicate to the public that barstool conversation: this is the science and this is the way it’s impacting society’s most vulnerable, and this is what we should worry about as citizens of a democracy.

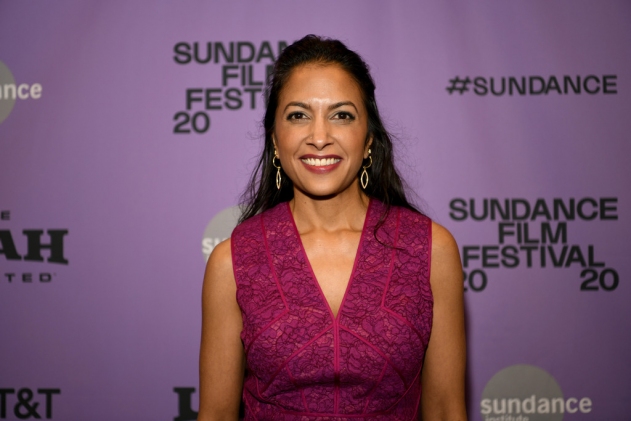

Shalini Kantayya

CODED BIAS is directed and produced by Shalini Kantayya and edited by Kantayya, Zachary Ludescher, and Alexandra Gilwit.

Cover image: courtesy of Sundance Institute
